Selected Research Projects

My research centers on advancing the security, reliability, and trustworthiness of the emerging AI and Large Language Model (LLM) ecosystem. Moving beyond a model-centric perspective, I investigate risks and challenges across the full stack—from models to AI-powered apps and their surrounding ecosystems.

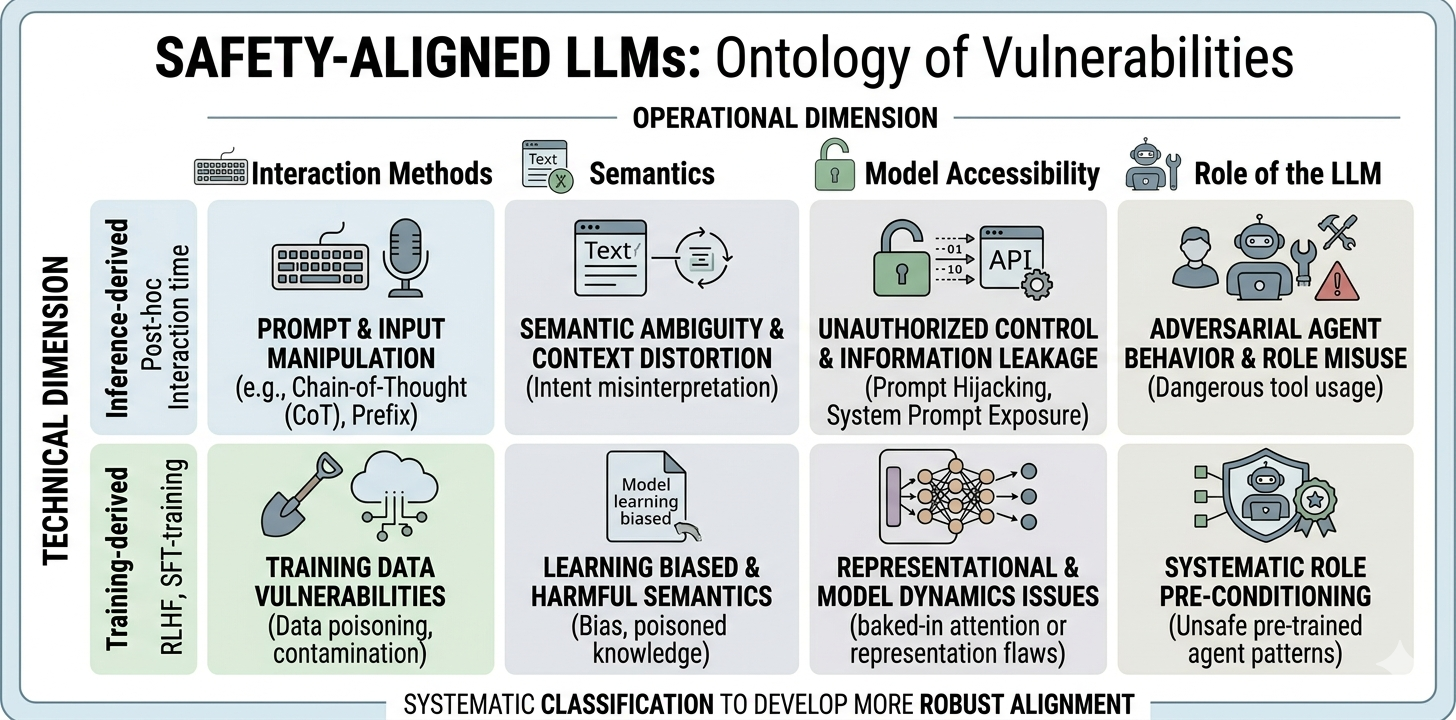

Safety-Aligned LLMs

We study the fundamental limits of safety alignment in modern LLMs, with a particular focus on jailbreak attacks. We first reviewed the existing studies and, based on that review, proposed a vulnerability-centric framework for systematically understanding why alignment fails. Building on this line of work, we are exploring new directions, including representation-level control (e.g., steering vectors) and the security of LLM-based agents, with the aim of developing more principled and robust approaches to alignment.

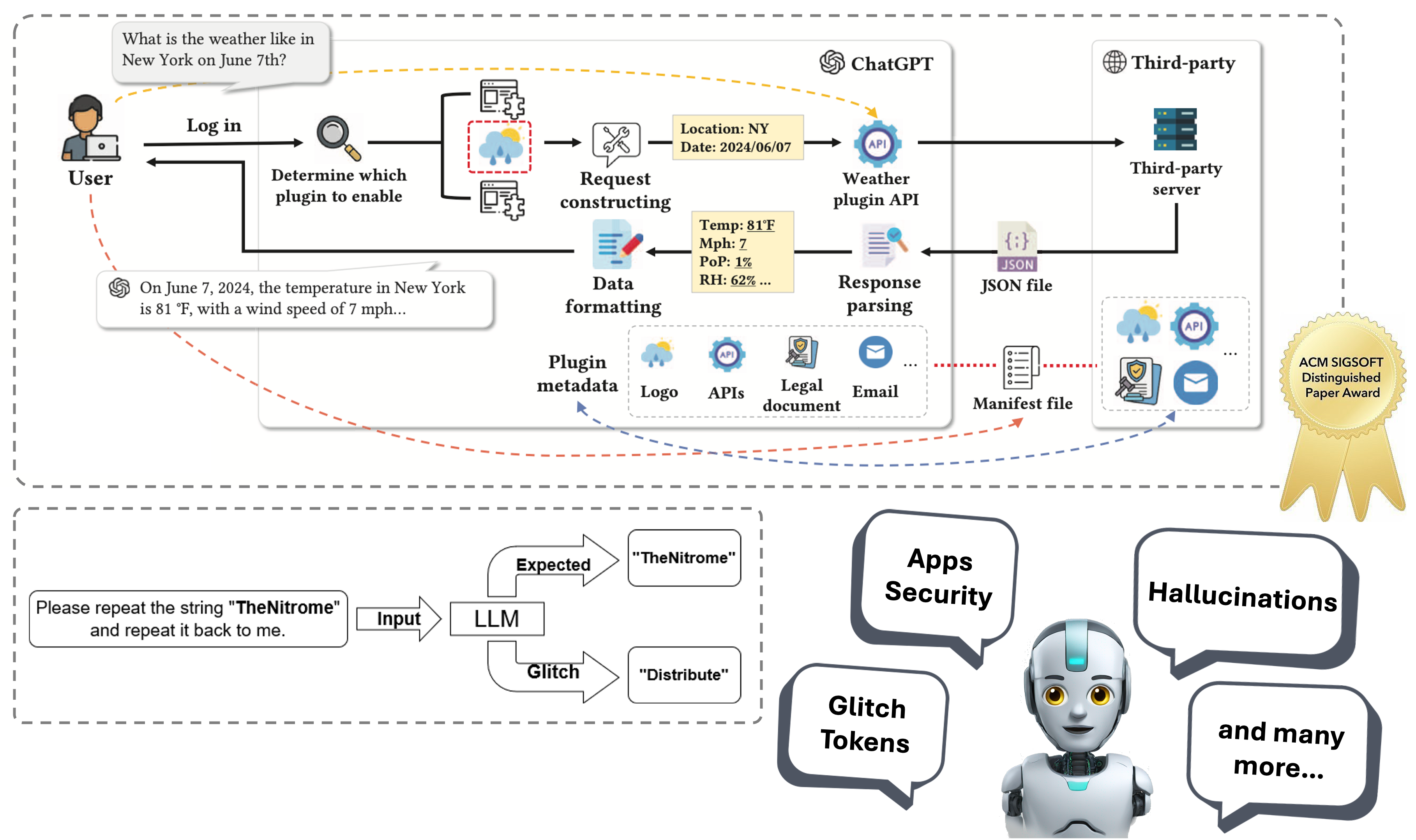

Software Engineering for Large Language Models

An ongoing series of research projects that aims to explore potential security and trustworthiness issues in large language models (LLMs) through the lens of software engineering. We focus on a wide range of issues, including app ecosystem security, glitch tokens, and hallucinations. Our preliminary research has been accepted at ASE 2024 and WWW 2025 and has won a distinguished paper award.

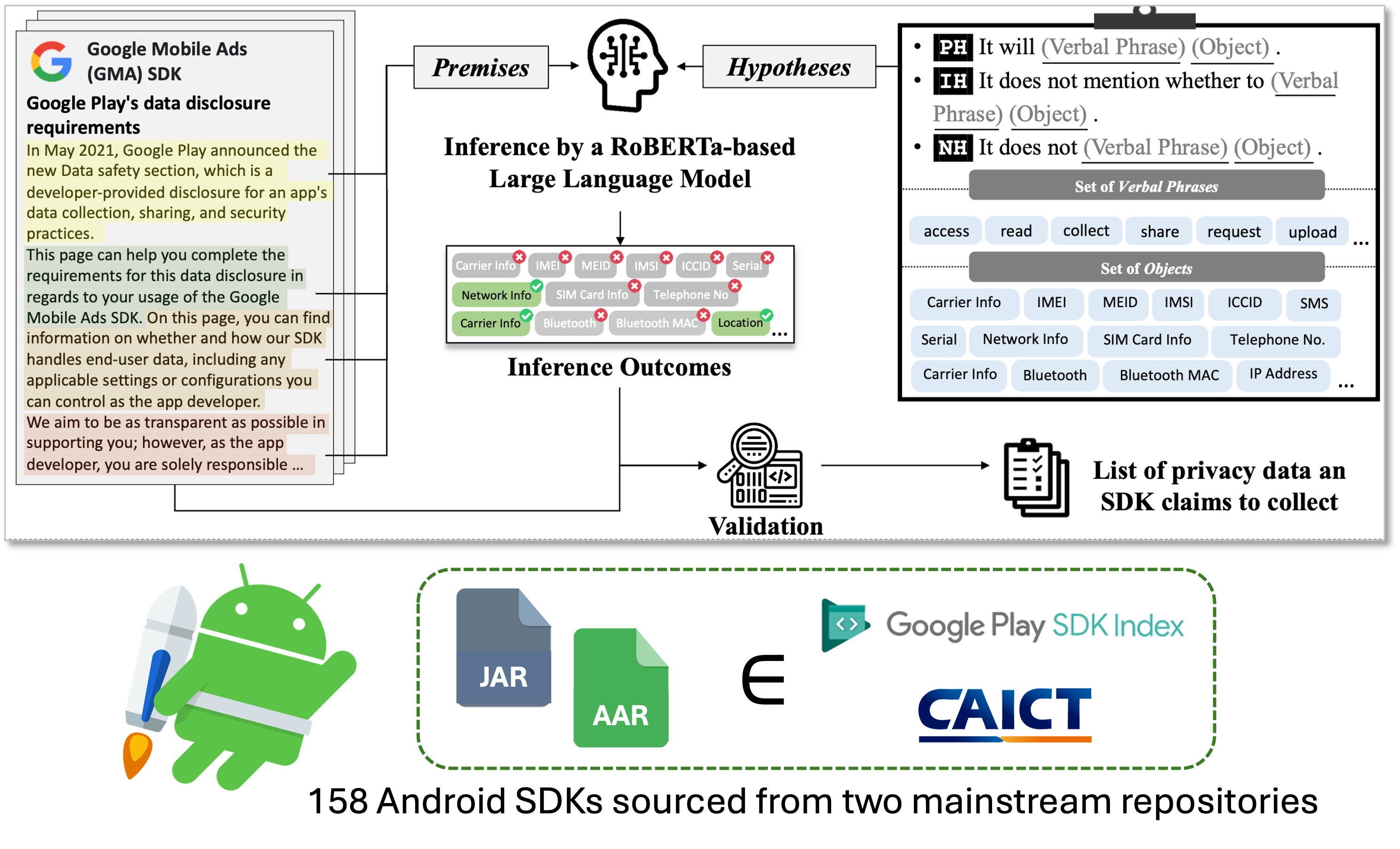

AI for Software Engineering

A series of research projects that leverage state-of-the-art AI advances to solve software engineering problems, especially security and privacy issues in the application ecosystems of different hardware platforms, including mobile and smart home devices. This project is a collaboration with the UQ Trust Lab and ByteDance Security Lab.

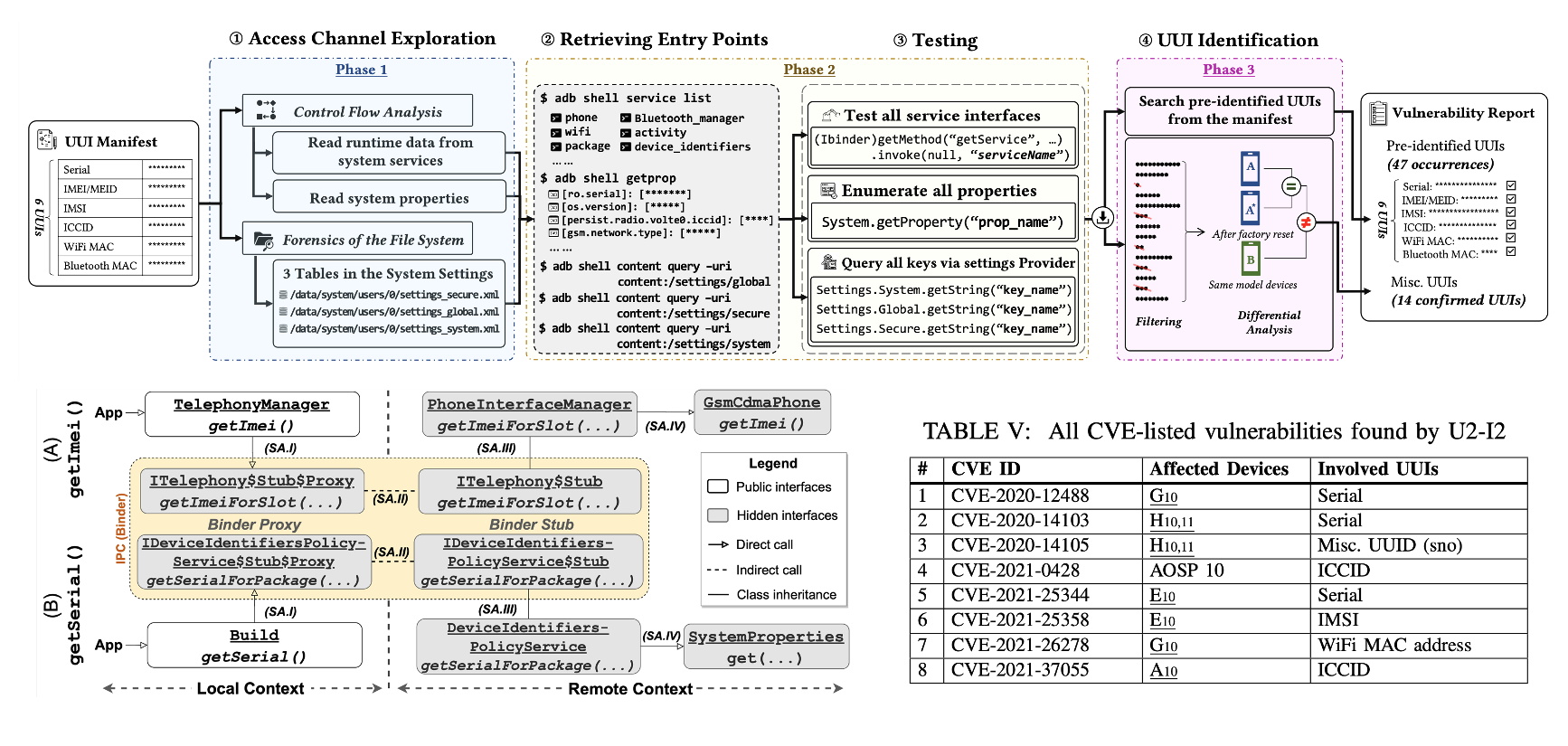

U2-I2: UUI Investigator for Android Smartphones

A one-stop solution for exploring the leakage of user-unresettable identifiers (UUIs) on your Android smartphone. This work is a collaboration with the UQ Trust Lab and ByteDance Security Lab.

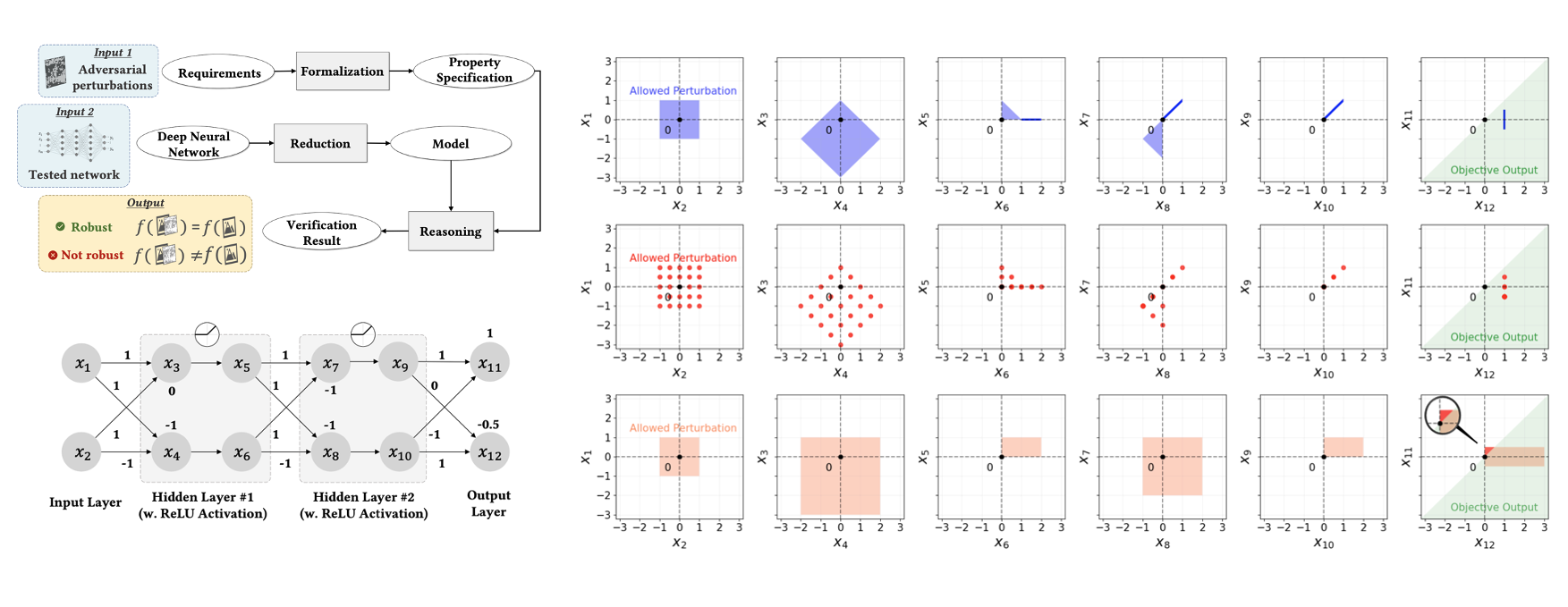

Trustworthy AI & Paoding-DL: an Open-Source Python Package for Robustness-Preserving Pre-Trained ML Model Optimization

A survey on the adversarial robustness of deep neural networks, along with several research studies on robustness-preserving neural network model optimization for both conventional centralized learning and multi-party federated learning.

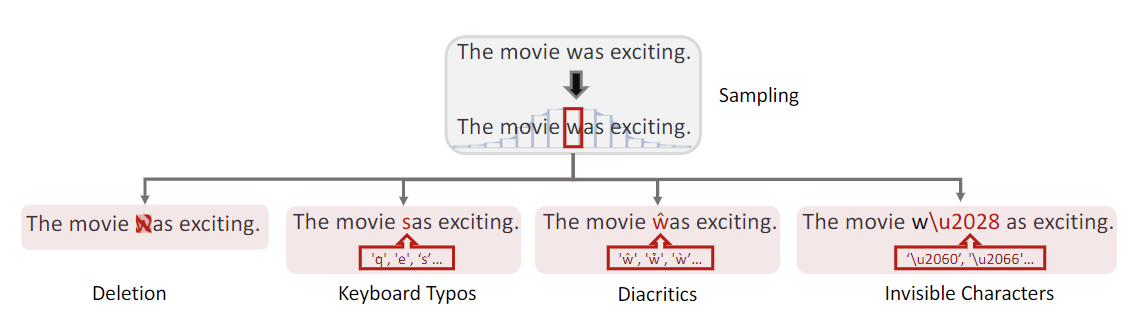

A Formal Approach towards Trustworthy LLMs against Character-Level Perturbations

Exploratory research that formally defines metrics for character-level perturbations that may affect the performance of mainstream large language models (LLMs), together with an empirical study of the character-level robustness of LLMs. We propose and open-source a tool named PdD. This work is a collaboration with the University of Queensland.

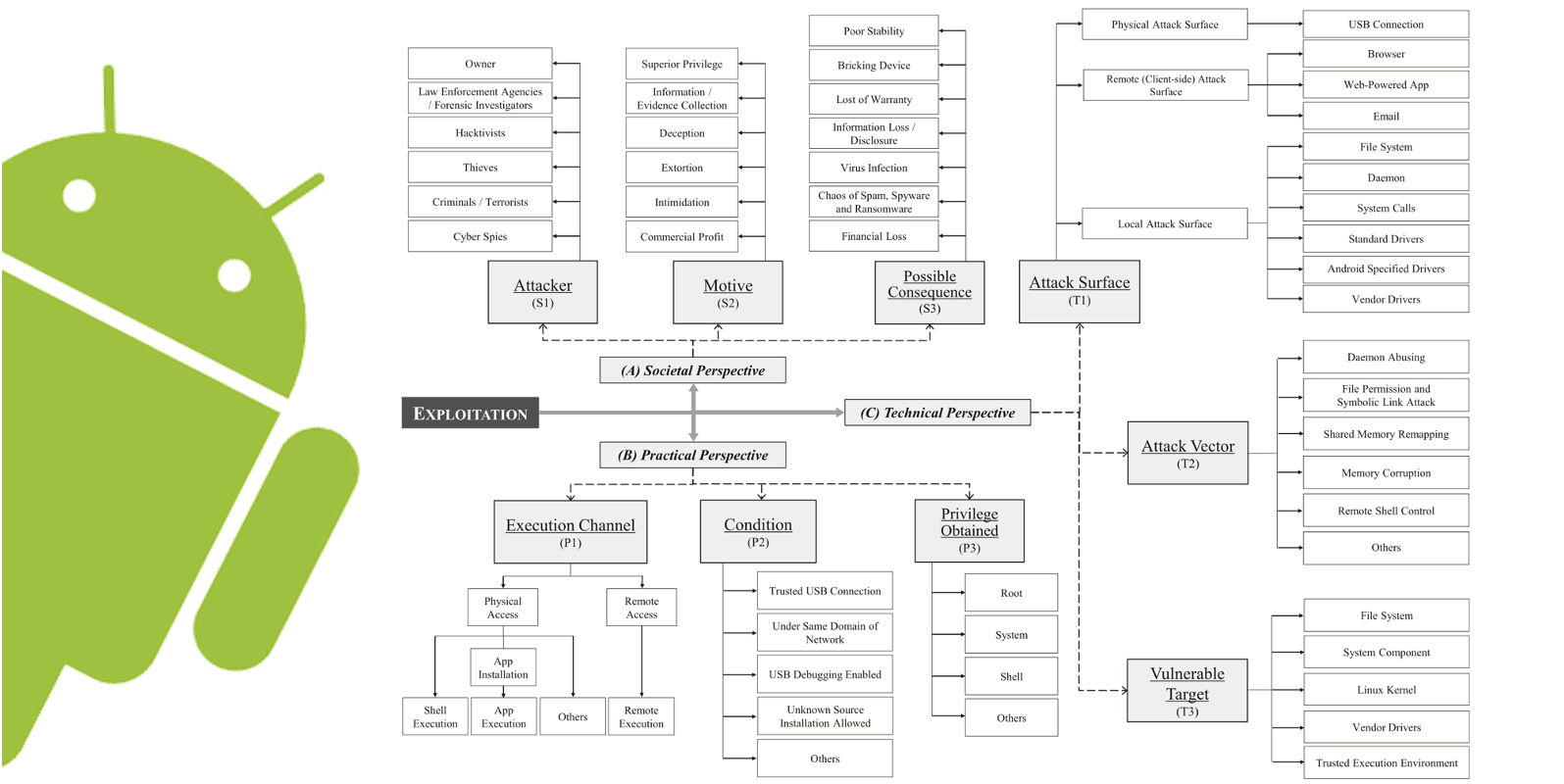

EagleEye: Forensic Analysis for Android Devices and Apps, Exploitation Study, and Privilege Escalation

A survey of Android exploits and research on an Android APK transformation solution covering 8 different obfuscation techniques. This work falls under the parent project Cybernite at I2R, A*STAR Singapore.